
When a user requests a file from a particular website, the website uses lossy compression to send the file to the user over the internet.

Discuss how this use of lossy compression might affect the user's experience.

Outline the functions of each of these three processes: crawling, indexing, searching.
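
The three stages can be illustrated with a minimal Python sketch. It assumes a tiny hypothetical in-memory "web" (the `WEB` dictionary and its `.example` URLs are invented); a real crawler would fetch pages over HTTP, parse their HTML and rank the results.

```python
from collections import deque

# Hypothetical in-memory "web": URL -> (page text, outgoing links).
# A real crawler would download each page and extract its links from the HTML.
WEB = {
    "a.example": ("open standards and interoperability", ["b.example", "c.example"]),
    "b.example": ("lossy and lossless compression", ["c.example"]),
    "c.example": ("lossless compression keeps every bit", ["a.example"]),
}

def crawl(seed):
    """Crawling: follow links breadth-first from a seed URL, visiting each page once."""
    seen, queue, pages = {seed}, deque([seed]), {}
    while queue:
        url = queue.popleft()
        text, links = WEB.get(url, ("", []))
        pages[url] = text
        for link in links:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return pages

def build_index(pages):
    """Indexing: map each word to the set of URLs whose text contains it (an inverted index)."""
    index = {}
    for url, text in pages.items():
        for word in text.lower().split():
            index.setdefault(word, set()).add(url)
    return index

def search(index, query):
    """Searching: return the URLs containing every query word (no ranking applied)."""
    results = None
    for word in query.lower().split():
        urls = index.get(word, set())
        results = urls if results is None else results & urls
    return sorted(results or [])

index = build_index(crawl("a.example"))
print(search(index, "lossless compression"))  # ['b.example', 'c.example']
```

Searching uses only the prebuilt index, never the live pages, which is why queries can be answered quickly.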

Explain why the PageRank algorithm might discriminate against new websites.
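
A simplified PageRank, computed by power iteration, shows the effect. This is a sketch assuming a hypothetical four-page link graph in which every page has at least one outgoing link, with the commonly used damping factor `d = 0.85`; production search engines use many further signals.

```python
def pagerank(links, d=0.85, iterations=50):
    """Simplified PageRank by power iteration.

    links maps each page to the pages it links to. Every page here has at
    least one outgoing link, so no dangling-node handling is needed.
    """
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page receives the same small base score...
        new_rank = {p: (1 - d) / n for p in pages}
        # ...plus a share of the rank of each page that links to it.
        for page, outgoing in links.items():
            share = rank[page] / len(outgoing)
            for target in outgoing:
                new_rank[target] += d * share
        rank = new_rank
    return rank

# Hypothetical link graph: three established sites link to each other,
# while "new.example" links out but nobody links to it yet.
web = {
    "old1.example": ["old2.example", "old3.example"],
    "old2.example": ["old1.example", "old3.example"],
    "old3.example": ["old1.example", "old2.example"],
    "new.example": ["old1.example"],
}
ranks = pagerank(web)
for page, score in sorted(ranks.items(), key=lambda kv: -kv[1]):
    print(f"{page}: {score:.3f}")
```

Because no page links to `new.example`, its score never rises above the base value (1 - d) / n = 0.0375, however good its content is.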

Explain how a search engine is able to maintain an up-to-date index when the web is continually expanding.

The internet and World Wide Web are often considered to be the same, or the terms are used in the wrong context.

Many organizations produce computer-based solutions that implement open standards.

A search engine is software that allows a user to search for information. The most commonly used search algorithms are the PageRank and HITS algorithms.

Distinguish between the internet and the World Wide Web.

Outline two advantages of using open standards.

Outline why a search engine using the HITS algorithm might produce different page ranking from one using the PageRank algorithm.
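
One source of difference is that HITS is run on a query-specific set of pages and gives each page two scores (hub and authority), whereas PageRank gives one query-independent score. A minimal Python sketch of HITS, assuming a small hypothetical subgraph of results for one query:

```python
import math

def hits(links, iterations=50):
    """Simplified HITS: iteratively update hub and authority scores.

    A page's authority is the sum of the hub scores of pages linking to it;
    its hub score is the sum of the authority scores of pages it links to.
    Both score sets are normalized (Euclidean norm) after each step.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    hub = {p: 1.0 for p in pages}
    auth = {p: 1.0 for p in pages}
    for _ in range(iterations):
        auth = {p: sum(hub[q] for q in links if p in links[q]) for p in pages}
        norm = math.sqrt(sum(v * v for v in auth.values())) or 1.0
        auth = {p: v / norm for p, v in auth.items()}
        hub = {p: sum(auth[t] for t in links.get(p, [])) for p in pages}
        norm = math.sqrt(sum(v * v for v in hub.values())) or 1.0
        hub = {p: v / norm for p, v in hub.items()}
    return hub, auth

# Hypothetical query-specific subgraph: a directory page links to two museums.
subgraph = {
    "directory.example": ["museum1.example", "museum2.example"],
    "blog.example": ["museum1.example"],
}
hub, auth = hits(subgraph)
print(max(hub, key=hub.get), max(auth, key=auth.get))
```

Here `directory.example` is the best hub but has zero authority, so a ranking based on authority would place it below the museums it points to.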

Web crawlers browse the World Wide Web.

Explain how data stored in a meta-tag is used by a web crawler.
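
As an illustration, a crawler can read `<meta>` tags with Python's standard `html.parser` module. The page and its tag values below are hypothetical; real crawlers differ in which meta-tags they trust.

```python
from html.parser import HTMLParser

class MetaTagParser(HTMLParser):
    """Collect <meta name="..." content="..."> pairs, as a crawler might when indexing a page."""

    def __init__(self):
        super().__init__()
        self.meta = {}

    def handle_starttag(self, tag, attrs):
        if tag == "meta":
            attributes = dict(attrs)
            name = attributes.get("name")
            if name and "content" in attributes:
                self.meta[name.lower()] = attributes["content"]

# Hypothetical page; the tag values are invented for illustration.
page = """<html><head>
<title>City Art Gallery</title>
<meta name="description" content="Opening times and current exhibitions">
<meta name="keywords" content="art, gallery, museum">
<meta name="robots" content="noindex, nofollow">
</head><body>...</body></html>"""

parser = MetaTagParser()
parser.feed(page)
print(parser.meta["description"])
if "noindex" in parser.meta.get("robots", ""):
    print("Crawler should not add this page to the index")
```

The description may be stored as the summary shown in results, the keywords may feed the index, and the robots directive tells the crawler whether to index the page and follow its links.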

A web application (app) runs on mobile devices such as smartphones and tablets. It allows users to see their position on a map in real time as they walk around a city, together with the surrounding attractions. The app uses icons to represent tourist attractions such as art galleries and museums. When the user clicks on an icon, further details, such as opening times, are shown. The app makes some use of client-side scripting.

Many art galleries have websites that can be found by search engines. White hat techniques and practices allow website developers to optimize the search process. It is good practice to keep the source code of websites up to date with current information.

Outline the functioning of this app. Include specific references to the technology and software involved.

With reference to the use on mobile devices, outline a feature of this application that may rely on client-side scripting.

State two metrics used by search engines.

Explain why keeping the HTML source code of a website clean, by removing old information, optimizes the search process.

The evolution of the web, architectures, protocols and their uses has led to increasingly sophisticated services that run on peer-to-peer (P2P) architectures.

Explain how a P2P network can provide more reliability than a client-server model.
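
A simple probability model can make the reliability argument concrete. This sketch assumes, hypothetically, that every machine is online 90% of the time and that failures are independent; real networks rarely satisfy independence exactly.

```python
def client_server_availability(p_server):
    """A single server is a single point of failure: the service is up only if it is."""
    return p_server

def p2p_availability(p_peer, replicas):
    """A file held by several independent peers is reachable if at least one is online."""
    return 1 - (1 - p_peer) ** replicas

# Hypothetical figures: each machine is online 90% of the time.
print(f"client-server: {client_server_availability(0.9):.3f}")
print(f"P2P, 3 peers:  {p2p_availability(0.9, 3):.3f}")
```

With three peers holding the same file, availability rises from 0.900 to 0.999, because all three would have to fail at once.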

A non-profit organization, GreenAid, which operates in a less economically developed country (LEDC), uses old computers that have been donated to them. The organization relies on WiFi and satellite-based transmission for access to the Internet.

GreenAid is considering using a cloud-based service provided by a large IT company based in one of the more economically developed countries (MEDC).

Outline one technical challenge that GreenAid may encounter in setting up its own website with the technologies that it has available.

Explain how the use of cloud-based services may help GreenAid to overcome the limitations of the available technologies.

GreenAid wishes to improve its ranking in the major search engines.

Discuss whether GreenAid should use black hat search engine optimization techniques in order to improve its ranking.

Describe two problems this may cause for an organization such as GreenAid.

Sestra.com is a website maintained by a company that sells items made by local craftspeople.

The website is compatible with different screen sizes and formats ranging from desktop computers to mobile smartphones. All the site’s pages contain the following code fragment:

<link rel = 'stylesheet' href = '../css/default.css'>

Visitors to the site can search categories of products (for example “Toys”, “Bags”, “Dresses” etc.) selected from a drop-down menu. The menu is populated from the records stored in the CATEGORY table of the site’s database.
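
The site itself uses PHP, but the idea of building the drop-down menu from database records can be sketched in Python with `sqlite3`. The column names `category_id` and `name` are hypothetical, since the structure of the CATEGORY table is not given.

```python
import sqlite3
from html import escape

# Hypothetical schema: the real CATEGORY table's columns are not given,
# so "category_id" and "name" are assumptions for this sketch.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE CATEGORY (category_id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
conn.executemany("INSERT INTO CATEGORY (name) VALUES (?)", [("Toys",), ("Bags",), ("Dresses",)])

def category_menu(connection):
    """Build a <select> drop-down from the CATEGORY records, escaping text for HTML."""
    rows = connection.execute("SELECT category_id, name FROM CATEGORY ORDER BY name")
    options = "\n".join(
        f'  <option value="{cid}">{escape(name)}</option>' for cid, name in rows
    )
    return f'<select name="category">\n{options}\n</select>'

print(category_menu(conn))
```

Because the menu is generated on the server from the table, adding a new category record updates the menu without editing any HTML.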

Parts of the code of the file search.php are shown below:

// Other code present here

// Other code present here

// Other code present here

The owners of the company have noticed that Sestra.com does not appear very prominently in search engine results.

The Sestra.com site includes:

Identify two ways that a cascading style sheet (CSS) can be used to ensure web pages are compatible with different screen sizes and formats.

Explain the processing this code enables on the server before search.php is sent to the client.

Describe two ways in which the site developers could use white hat optimization to improve the site’s search engine ranking.

Distinguish between lossy and lossless compression.
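
The key difference, whether the original data can be restored exactly, can be shown with Python's `zlib` module (DEFLATE, the lossless method used inside PNG). The quantization step below is a toy stand-in for a lossy codec, not how JPEG actually works; real lossy codecs discard detail that human perception is less sensitive to.

```python
import zlib

# Hypothetical sample data standing in for a file (e.g. pixel values).
data = bytes(range(0, 256, 3)) * 20

# Lossless: zlib restores exactly the original bytes.
lossless = zlib.compress(data)
restored = zlib.decompress(lossless)

def quantize(values, step=16):
    """Toy lossy step (not a real codec): round each byte down to a multiple of step."""
    return bytes((v // step) * step for v in values)

# Lossy: discard detail first, then compress; the discarded detail is gone for good.
lossy = zlib.compress(quantize(data))
approx = zlib.decompress(lossy)

print("lossless exact:", restored == data)   # True
print("lossy exact:   ", approx == data)     # False
```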

Explain why the developers at Sestra.com would use lossless compression for the PDF documents.

Jackson City University has a Music Department that provides music lessons to students in a number of high schools in the city.

The Jackson City University Music Department teachers visit the different schools in the city to teach students a range of musical instruments.

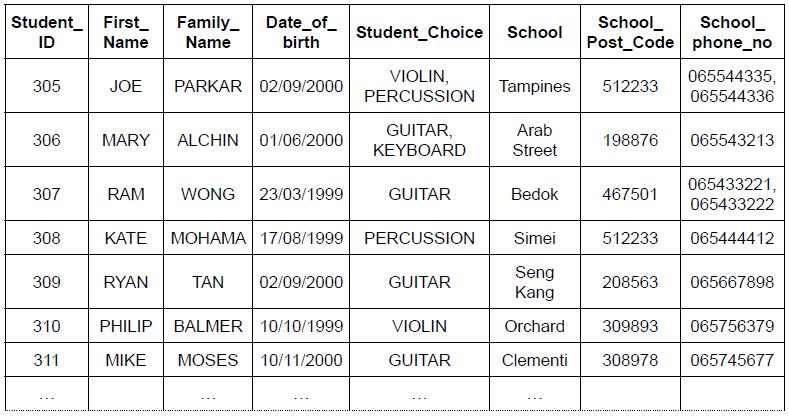

The following diagram shows an unnormalized table of student data.

Explain one benefit of normalizing a database.

Identify three ways that incorrect data could be prevented from being added into the School_phone_no field.
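
Typical validation checks (presence, format and length) can be sketched as follows. The rules are hypothetical: the required format of School_phone_no is not given, so the sketch allows digits, spaces, hyphens and an optional leading +, with 7 to 15 digits.

```python
import re

def validate_school_phone(value):
    """Return a list of problems with a School_phone_no entry (empty list = accepted).

    The rules are hypothetical: the real field's required format is not given.
    """
    problems = []
    value = value.strip()
    if not value:                                   # presence check
        return ["School_phone_no must not be empty"]
    digits = re.sub(r"[\s-]", "", value)            # allow spaces and hyphens as separators
    if not re.fullmatch(r"\+?\d+", digits):         # type/format check
        problems.append("only digits, an optional leading +, spaces or hyphens allowed")
    if not 7 <= len(digits.lstrip("+")) <= 15:      # length (range) check
        problems.append("must contain 7 to 15 digits")
    return problems

for entry in ["555-0142", "", "12AB567", "123"]:
    print(repr(entry), validate_school_phone(entry) or "OK")
```

The same rules could also be enforced in the database itself, for example as a field constraint, so that no application can bypass them.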

Outline what would be necessary to make the above unnormalized table conform to 1st Normal Form (1NF).

Construct the 3rd Normal Form (3NF) of the unnormalized relation shown above.

Explain the difference between 2nd Normal Form (2NF) and 3rd Normal Form (3NF).

HTTPS is not a distinct protocol, but makes specific use of a combination of two separate protocols.

Identify these two protocols.

Outline their functions.

Outline the interaction between a user, a web browser and a domain name server when accessing sites on the internet.

Describe the processing that takes place when the form that was sent from the client is received by the web server.

Explain, giving two reasons, why the use of server-side scripting promotes security when retrieving content from databases.
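
One reason is that the server-side script, not the browser, builds the database query, so it can treat user input strictly as data. A minimal Python sketch with `sqlite3`, assuming a hypothetical `users` table, contrasts string concatenation with a parameterized query:

```python
import sqlite3

# Hypothetical users table for the sketch.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (username TEXT, password_hash TEXT)")
conn.execute("INSERT INTO users VALUES ('alice', 'x1')")

malicious = "nobody' OR '1'='1"

# Unsafe: building SQL by string concatenation lets input rewrite the query.
unsafe_sql = f"SELECT username FROM users WHERE username = '{malicious}'"
print(conn.execute(unsafe_sql).fetchall())   # returns alice's row

# Safer: a parameterized query treats the input purely as data.
safe = conn.execute("SELECT username FROM users WHERE username = ?", (malicious,))
print(safe.fetchall())                       # []
```

A second reason is that the connection details and the query code stay on the server and are never sent to the client.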

A museum has an online catalogue of its pieces. No new pieces have been acquired over the past 20 years, and the museum has been at risk of closure for lack of funding. Its website consists only of static web pages.

It is suggested that the museum’s static web pages may be contributing to the museum’s lack of success.

Identify three differences between a static web page and dynamic web page.

Suggest two services, and the benefits that they would provide, if the museum were to redesign its website to be dynamic.

Explain how the provision of services on the museum’s website can increase its rankings in search engines.

It is also suggested that the museum’s basic catalogue be revised and upgraded with all of the museum’s pieces being catalogued using meta-tags.

Suggest how the use of meta-tags can be relevant for search engine optimization.

The code below is part of an XML (Extensible Mark-up Language) document that contains details of a DVD collection.

<collection>
  <dvd>
    <title>The Hobbit</title>
    <genre>Fantasy</genre>
  <dvd>
    <title>Sleepless in Seattle</title>
    <genre>Romance</genre>
  </dvd>
  <!--more DVDs entered here-->
</collection>

Identify the error in the section of XML code shown above.
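
An XML parser can be used to check the fragment. This sketch uses Python's `xml.etree.ElementTree`, lays the fragment out one element per line and omits the comment; `is_well_formed` is a helper written for this example.

```python
import xml.etree.ElementTree as ET

broken = """<collection>
  <dvd>
    <title>The Hobbit</title>
    <genre>Fantasy</genre>
  <dvd>
    <title>Sleepless in Seattle</title>
    <genre>Romance</genre>
  </dvd>
</collection>"""

def is_well_formed(text):
    """Return True if text parses as XML, False if the parser rejects it."""
    try:
        ET.fromstring(text)
        return True
    except ET.ParseError:
        return False

print(is_well_formed(broken))   # False: the first <dvd> is never closed

# Closing the first <dvd> before the second one opens makes the document parse.
fixed = broken.replace(
    "<genre>Fantasy</genre>\n  <dvd>",
    "<genre>Fantasy</genre>\n  </dvd>\n  <dvd>",
)
print([dvd.findtext("title") for dvd in ET.fromstring(fixed).findall("dvd")])
```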

Outline the meaning of this property in the context of XML code.

Describe one benefit of storing HTML formatting information in a CSS file.

Describe how these two protocols work together when sending data over the internet.

Explain how client-side scripting might be used on this login page before the page is sent to the web server of the shopping site. You should make reference to any software used.

Outline the benefit of using client-side scripting for the shopping site.

Explain how the use of scripts allows the user to change their profile photograph without reloading the complete page.

The IB Coordinator of AB World Academy introduces the Extended Essay to the Grade 11 students in January by having them research the difference between primary and secondary data on the internet.

Some of the students used the Google search engine (Google.com) and others used the Ask search engine (Ask.com). These search engines gave different results.

Google uses the PageRank algorithm and Ask uses the HITS algorithm.

The IB coordinator uploaded the assignments onto a cloud-based Learning Management Platform.

As part of their research, students downloaded images from the internet. Most of the downloaded JPG images were compressed using lossy compression.

Define the term search engine.

Distinguish between the principles of these two algorithms.

Describe the difference between cloud computing and local client-server architecture.

State the alternative type of compression to lossy.

Evaluate the advantages and disadvantages for students of using compressed images in their IB Coursework.

A PNG image uses open standards.

Distinguish between interoperability and open standards.